Designing Better Learning with AI: Why Structured Prompting Matters

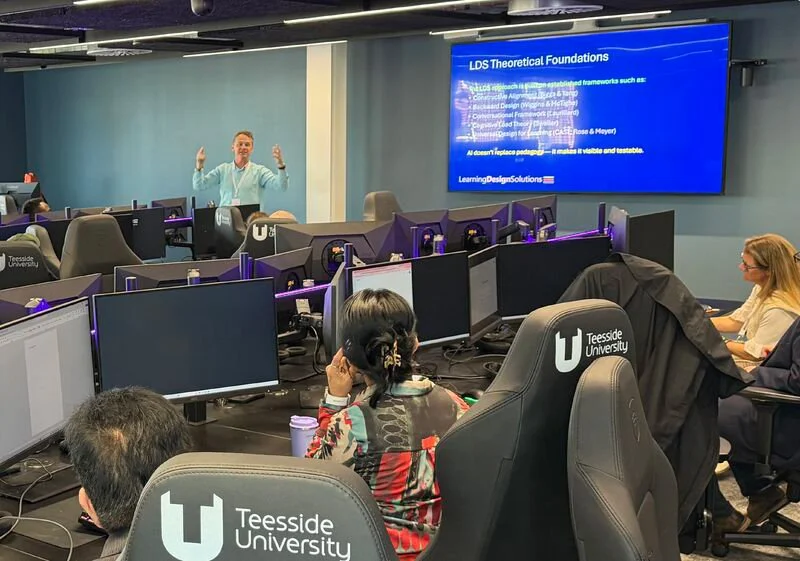

At the recent Future Facing Learning and AI in Higher Education conference at Teesside University, I explored a question that is becoming increasingly important for anyone working in digital education:

How can we use AI to support the design of high-quality learning, rather than simply generate content?

This was the focus of the workshop I ran, titled Prompting as Pedagogical Design: Enabling the Three Voices Model for AI-Enhanced Course Development. This blog post brings together some of the key ideas from that session, focusing on one practical takeaway: how to construct effective pedagogic prompts.

A Reflection on Practice

The session itself was highly engaging. There was a high level of discussion and collaboration within the small groups that formed in the room, with colleagues sharing approaches, testing ideas, and reflecting critically on outputs.

It was particularly encouraging to see that some participants are already working in similar ways — developing and using trained AI agents to support aspects of course design. In many cases, this work is still at an exploratory stage, with colleagues researching and experimenting with how these tools might be applied more systematically.

What was striking, however, was how quickly introducing a structured approach to prompting began to improve the quality of outputs. Several colleagues commented that, when they applied this structure to tasks they were already working on, they were able to generate responses with a level of accuracy and depth that was, in some cases, surprising.

At the same time, there was a clear and shared understanding across the room:

AI outputs are not finished products.

They require review, questioning, and iteration. Quality emerges not from the initial response, but from the way it is interrogated and refined through informed, pedagogical judgement.

Prompting as Pedagogical Design

It is easy to think of prompting as a technical skill — a way of getting better outputs from AI systems. But in higher education, this framing is too narrow.

When we design learning, we are not simply producing content. We are making decisions about:

what students need to learn

what they need to do

how they demonstrate achievement

how learning activities prepare them for assessment

These are fundamentally pedagogical decisions.

When AI becomes part of this process, those decisions can no longer remain implicit. The prompt becomes the place where we articulate:

the learning outcome

the level of study

the nature of the activity

the role of disciplinary knowledge

the expected output

In this sense, prompting becomes a form of pedagogical reasoning made visible.

This idea is explored in more depth in a full paper on Prompting as Pedagogical Design, which will be published as part of the conference proceedings at the end of May.

From Instruction to Structure

One of the key takeaways from the session was that effective prompting benefits from structure.

A weak prompt might look like this:

“Write an activity on critical thinking.”

There is no context, no indication of level, no clarity about what students should do, and no sense of how the activity supports learning outcomes or assessment. The result is predictable: generic, decontextualised output.

By contrast, an effective pedagogic prompt operates more like a micro-design brief. It defines:

the context of the module

the intended learning outcome

the learner activity

the disciplinary content

the expected output

and what success looks like

This mirrors the principle of constructive alignment: starting with what students need to achieve and designing learning accordingly.

A better-structured prompt might look something like this:

“You are supporting the design of an online postgraduate module, Research Methods in Education.

This week focuses on Evaluating Research Evidence and aligns with the learning outcome:

‘Critically appraise and compare methodological approaches in published studies.’ Students are studying asynchronously and have approximately 3–4 hours of study time this week.

Using the supplied material on qualitative vs quantitative validity, generate a short case-based learning activity (around 150 words) in which students evaluate two contrasting studies. Frame the task at the Evaluate level of Bloom’s taxonomy.

Conclude with model feedback explaining why one approach demonstrates stronger methodological rigour.”

A Practical Prompt Structure

To support this, we introduced a simple structure for designing prompts. This was one of the most useful takeaways for participants:

When designing a prompt, include:

Context — module, topic, level, delivery mode, available study time

Learning outcome — what students should be able to do

Learner activity — what students will actually do

Disciplinary content — key concepts, theories, or SME input

Output — what you want the AI to generate

Success criteria — what good looks like

This structure helps ensure that prompts are pedagogically grounded, rather than open-ended or generic.
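For teams who assemble prompts in a script or shared tool, the six-part structure above can also be expressed as a simple template. The Python below is a minimal sketch under our own assumptions: the `PedagogicPrompt` class and its method names are hypothetical, it only builds the prompt text (it does not call any AI service), and the example values are adapted from the Research Methods in Education prompt earlier in this post.

```python
from dataclasses import dataclass, fields


@dataclass
class PedagogicPrompt:
    """Hypothetical container for the six prompt components."""

    context: str = ""               # module, topic, level, delivery mode, study time
    learning_outcome: str = ""      # what students should be able to do
    learner_activity: str = ""      # what students will actually do
    disciplinary_content: str = ""  # key concepts, theories or SME input
    output: str = ""                # what the AI should generate
    success_criteria: str = ""      # what good looks like

    def missing_components(self) -> list[str]:
        """Return the names of any components left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Join the components into one labelled prompt, refusing if any are blank."""
        missing = self.missing_components()
        if missing:
            raise ValueError(f"Prompt is missing: {', '.join(missing)}")
        sections = []
        for f in fields(self):
            label = f.name.replace("_", " ").capitalize()  # e.g. "Learning outcome"
            sections.append(f"{label}: {getattr(self, f.name).strip()}")
        return "\n\n".join(sections)


if __name__ == "__main__":
    prompt = PedagogicPrompt(
        context=("Online postgraduate module, Research Methods in Education; "
                 "week on Evaluating Research Evidence; asynchronous, 3-4 hours of study time."),
        learning_outcome="Critically appraise and compare methodological approaches in published studies.",
        learner_activity="Evaluate two contrasting studies in a short case-based activity.",
        disciplinary_content="Supplied material on qualitative vs quantitative validity.",
        output=("A case-based learning activity of around 150 words, "
                "framed at the Evaluate level of Bloom's taxonomy."),
        success_criteria="Model feedback explaining why one approach demonstrates stronger methodological rigour.",
    )
    print(prompt.render())

    # A bare request such as "Write an activity on critical thinking" fills only one component.
    weak = PedagogicPrompt(output="An activity on critical thinking.")
    print(weak.missing_components())
```

Treating a blank component as an error is a deliberate design choice in this sketch: it makes gaps in the brief visible before anything is generated. The rendered text is still only a starting point for the review and iteration that follows.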

Prompting as Conversation

Another important shift is to think of prompting not as a one-off instruction, but as a conversation.

Participants quickly discovered that the first output is rarely the final answer. Instead, quality emerges through iteration — refining the prompt, questioning the response, and gradually improving alignment and clarity.

This reflects what we at Learning Design Solutions describe in our work as the ‘Three Voices Model’, a model of meaningful collaboration between:

the subject-matter expert, ensuring disciplinary rigour

the learning designer, ensuring alignment and structure

the AI, supporting generation and iteration

Each has a role to play, but responsibility for quality remains firmly with the human contributors.

Designing Learning Through Alignment

Perhaps the most important point to emerge from the session is this:

Learning design is not about generating content. It is about designing aligned learning experiences.

When we take a start-from-outcomes approach, learning activities become the central mechanism through which students achieve those outcomes. Content plays a supporting role — it enables students to engage in those activities.

This is where structured prompting becomes particularly valuable.

Because prompting requires us to specify:

the learning outcome

the level of study

what students need to do

and how that prepares them for assessment

…it naturally pushes us towards constructive alignment.

In effect, structured prompting becomes a way of testing alignment in real time. If the prompt is vague, the output exposes gaps in thinking. If the prompt is well-formed, the resulting activity is far more likely to be coherent, purposeful, and appropriately challenging.

This reinforces a key principle:

Learning activities are at the heart of design.

They are where outcomes are realised, skills are developed, and assessment is prepared for. AI can support this process, but only when guided by clear pedagogical intent.

Used in this way, AI is not simply a tool for generating materials — it becomes a tool for strengthening alignment across the design of a course.

Final Thought

AI does not design learning.

People design learning.

What AI offers is a way to make our thinking more explicit — to articulate, test, and refine the decisions that underpin high-quality course design.

The challenge, and the opportunity, is to ensure that this thinking remains pedagogically grounded, critically applied, and aligned to what students actually need to do in order to succeed.

Book a conversation

If you’re exploring how AI can support course design — or looking to scale high-quality online provision without compromising academic standards — I’d be very happy to talk.

You can find out more about our work at Learning Design Solutions and book a consultation here: